By Anshul Gupta

We are in the age of ‘lean’. Flexibility and agility are better suited to delivering customized offerings. Whether in products, business models, processes or lifestyles, the world is adopting an asset-light, or lean, paradigm. Consumers want fewer devices that cater to all their needs: calling, photography, health monitoring, payments, reading, emailing, gaming, video streaming and much more. Because all these applications run side by side on one platform, the competition now revolves around on-device optimization, that is, incremental innovations in speed, seamlessness, power and battery life, visual quality, and user experience. This is where on-device AI comes in.

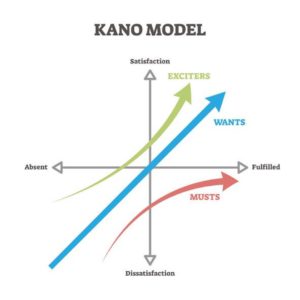

From clock speeds of 20 MHz to multiple GHz, storage of 3 MB to hundreds of GB, cameras of 0.5 MP to 48 MP, and single-core to octa-core processors, today’s consumer devices have outclassed their predecessors by a huge margin. The Kano model offers a way to describe this technological evolution. It helps identify which product features are “must-haves” (basic features), “wants” (expected features) or “exciters” (augmented features). Any technological leap arrives as an exciter and eventually becomes a must-have as it commoditizes over time. For example, the smartphone camera, once a novelty, is now a must-have. However, the story does not end there! A new exciter soon emerges as companies innovate to excite consumers.

Currently, this new exciter is on-device AI. With an installed base of over 4 billion mobile devices as of 2020, a large share of the world’s population has a phone today, most often a smartphone. In addition, more powerful hardware, intuitive software, efficient architectures and seamless connectivity at faster internet speeds make mobile phones ideal candidates for edge AI applications.

Let us look at the evolution of AI and of mobile phones, and at what their fusion has brought to the world!

Evolution of AI

Industry 4.0 is a paradigm shift for both industries and technology. It is the convergence of processes, systems, IoT, cloud, big data, XR and humans. This has resulted in intelligent applications such as automated manufacturing processes, virtual training and maintenance, and optimized fleet management. As these applications generated more data and intelligent algorithms kept evolving, AI-based applications became ubiquitous across industries. In recent years, on-device AI has emerged as one such area. It enables smart applications that process data in real time and provide decision-making insights on devices such as smartphones, laptops, smartwatches, and televisions. For example, super-resolution is a deep learning technique that reconstructs a higher-resolution image from a low-resolution one.

Global mobile market

From a business perspective, the mobile market spans more than 6,000 smartphone models, from low-range to premium devices. While the large players cater to a major chunk of this market, the rest is highly fragmented. The top 5 OEMs (Samsung, Huawei, Apple, Xiaomi and Oppo) account for 68% of the installed base, i.e. phones in use; the top 4 chipset manufacturers (Qualcomm, HiSilicon, Apple and Samsung) cover 80% of the world’s smartphone chipsets; and the top 3 GPU manufacturers (ARM Mali, Qualcomm Adreno and PowerVR) cover 90% of smartphone GPUs. Over time, these and a few other players have ventured into other video streaming devices or signed partnerships to create unique AI capabilities. They are now competing on immediate responsiveness, high reliability, data security and energy efficiency.

From a technology perspective, the hardware front is witnessing new chip designs and architectures, while software is leveraging their capabilities to create increasingly intelligent applications. Mobile OEMs and chipset manufacturers are aggressively incorporating advanced hardware. Examples include 5 nm ARM-based chipset designs that deliver several TOPS (tera, or trillion, operations per second), and AI accelerators such as NPUs (Neural Processing Units). These accelerators run machine learning operations faster while using less power and fewer resources, and have enabled applications such as advanced face recognition and authentication, voice assistance, camera recommendations for photography, and AR for Animoji. Different chipset manufacturers and phone OEMs refer to these accelerators by different names, such as TPU and NNP.

Hence, the emergence of on-device AI and the enhanced capabilities of smartphones have created new avenues for AI-based edge applications.

The next billion users have spurred the emergence and growth of startups that want to solve existing problems and build products for the growing adoption of the internet and digital technology. In India alone, over 1,300 tech startups were added in 2019, and 18% of all technology startups leverage deep tech. However, with Industry 4.0 and an AI boom catering to increasing needs, ‘digital pollution’ is also likely to surge. Video streamed through the cloud requires large volumes of data to be stored and transmitted. The cloud infrastructure consumes vast amounts of energy to process this data and adds to carbon emissions. For example, streaming a 30-minute HD video releases CO2 equivalent to driving a car for 6 km, and in 2019 video streaming produced emissions equal to the carbon footprint of Spain.

One way to reduce this load on the cloud is to distribute the processing out to the last mile. Edge AI solutions allow many processes to run on the user’s device, thereby reducing the number of operations performed in the centralized cloud.

Fovea Stream

One such example of on-device AI is Myelin Foundry’s product, Fovea Stream. Fovea Stream aims to reduce both the data that OTT players stream and the data that end users consume by upscaling SD-resolution input to HD-quality output on the edge device. Videos streamed in SD quality are smaller and consume less bandwidth. In a bandwidth-constrained country, this gives end users not just access to good-quality video content but also a quality viewing experience. From movies to sports to news to educational courses, video streaming is everywhere and is heavily consumed. As much as 58% of internet traffic is video. At such volumes, it is estimated that reducing data in this way could cut the carbon footprint of video streaming by up to 40%. What would happen if all AI could lead in this direction?
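
To make the data saving concrete, here is a rough back-of-the-envelope sketch in Python. It only compares how much data a stream transfers at an SD bitrate versus an HD bitrate. The bitrates (1.5 Mbps for SD, 5 Mbps for HD) and the function names are assumptions chosen purely for illustration. They are not Fovea Stream measurements, and the sketch does not model the upscaling itself or the energy used on the device.

```python
def stream_size_mb(bitrate_mbps: float, minutes: float) -> float:
    """Approximate data transferred, in megabytes, at a constant bitrate."""
    seconds = minutes * 60
    megabits = bitrate_mbps * seconds
    return megabits / 8  # 8 bits per byte


def data_saving_fraction(sd_bitrate_mbps: float, hd_bitrate_mbps: float) -> float:
    """Fraction of data saved by streaming at the SD bitrate instead of HD."""
    if hd_bitrate_mbps <= 0:
        raise ValueError("hd_bitrate_mbps must be positive")
    return 1 - sd_bitrate_mbps / hd_bitrate_mbps


if __name__ == "__main__":
    # Hypothetical bitrates, chosen only for illustration.
    SD_MBPS, HD_MBPS = 1.5, 5.0
    minutes = 30

    sd_mb = stream_size_mb(SD_MBPS, minutes)
    hd_mb = stream_size_mb(HD_MBPS, minutes)
    saving = data_saving_fraction(SD_MBPS, HD_MBPS)

    print(f"SD stream: {sd_mb:.0f} MB, HD stream: {hd_mb:.0f} MB")
    print(f"Data saved by streaming SD and upscaling on device: {saving:.0%}")
```

Actual savings depend on the codec, the content and the bitrates an OTT player really uses, so the output should be read as an order-of-magnitude illustration only.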